Interesting Engineering: This New Invention Lets You Smell Things Through Electricity Perfecting this idea would enable users to send smells over the internet.

The idea of changing smells in real time during an immersive experience such as watching a movie isn’t new. We can trace one such attempt back to 1959, when a technology called AromaRama was used to deliver smells to the audience.

The intended benefit was greater engagement: viewers would smell flowers when a scene was set in a garden, or smoke during war scenes and bomb explosions. Needless to say, the technology didn’t gain much traction.

The age where we can induce smell electrically!

In 2018, a much more efficient method promises the same results. Researchers at the Imagineering Institute in Malaysia have found a new way to help a person experience the smell of an occasion, and they plan to use it in AR and VR applications.

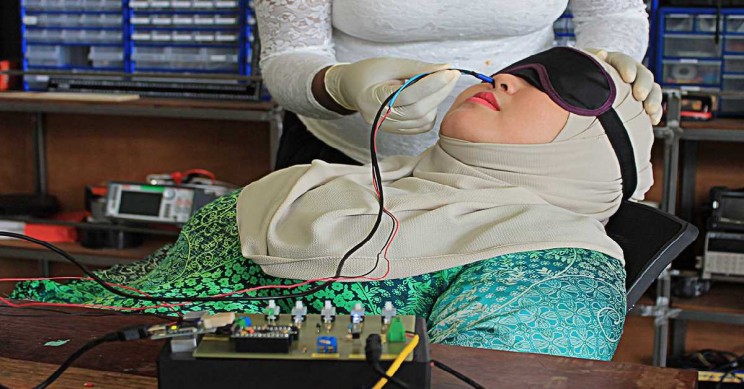

Imagine getting a sense of smell in mixed reality experiences. The researchers call this Digital Smell. So far, they have achieved it by bringing thin electrodes into contact with the inner lining of the human nose.

Yes, the current version requires two wires to be inserted into your nostrils.

That said, the researchers are working on a smaller form factor so that the device can be easily carried and used. The idea came from Kasun Karunanayaka, who pursued it as a project for his Ph.D. with Adrian Cheok, who now serves as the director of the institute.

Cheok is pursuing similar innovations, as his dream is to create a multisensory internet.

Much tinkering is needed to create a near-perfect form factor

The first version of the project used cartridges that mix and release chemicals to produce odors. But this was not what the team wanted going forward. They wanted a system that could produce scent through electricity alone.

The team also collaborates with a Japanese startup called Scentee to develop a smartphone gadget that can produce smells based on user inputs.

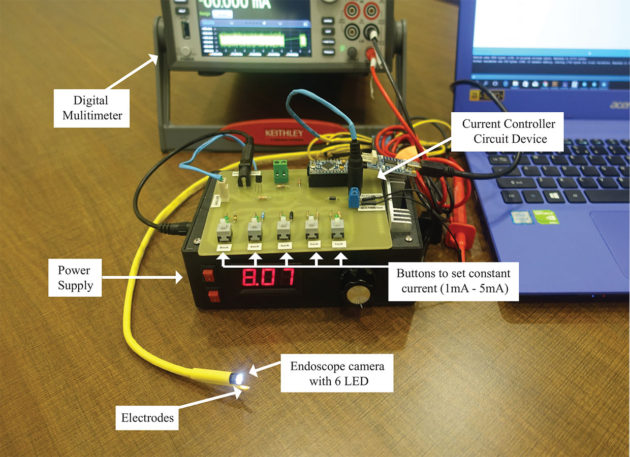

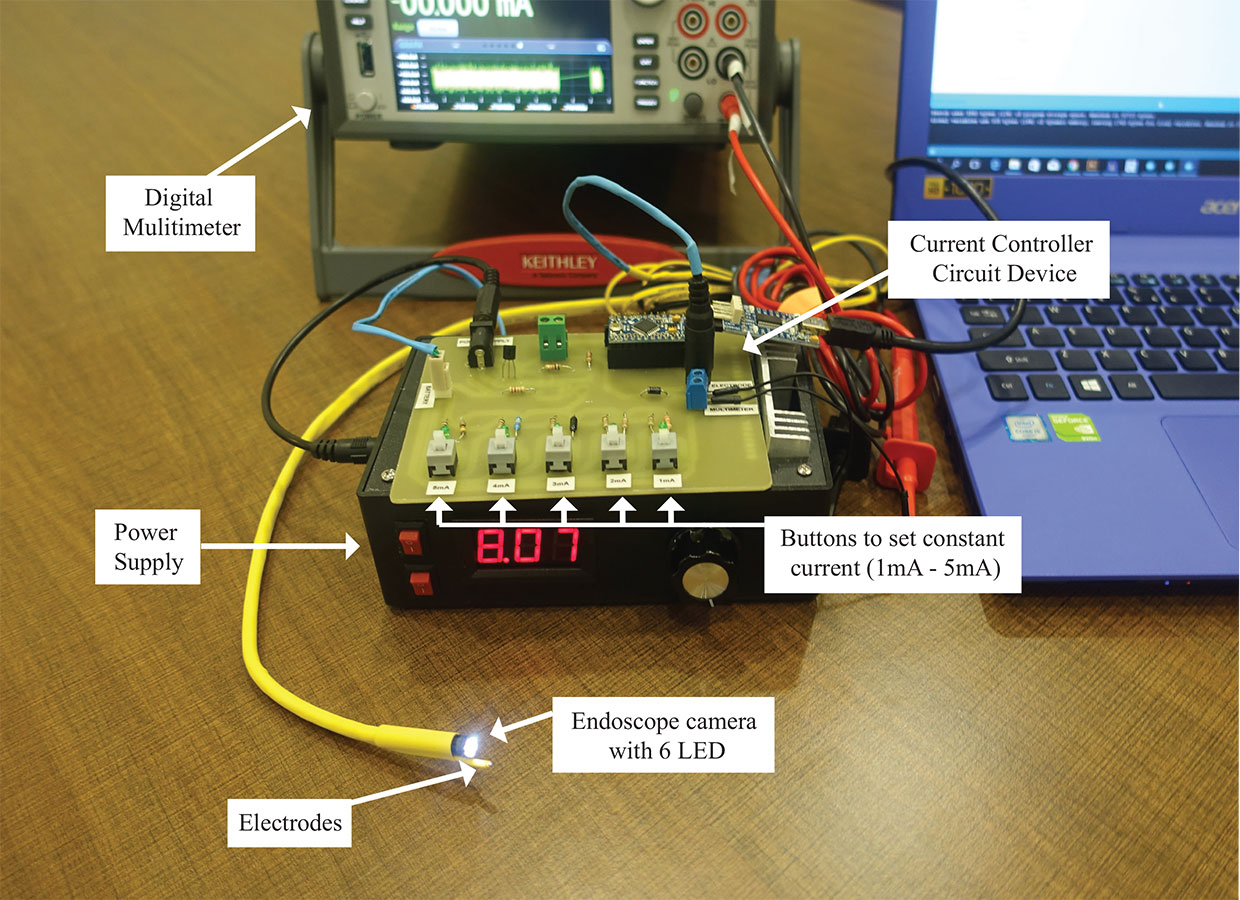

To create an all-electric system, the team experimented with stimulating human neurons electrically. The test requires a wire to be inserted into the subject’s nose. When its exposed silver tip touches the olfactory epithelium, located approximately 3 inches into the nasal cavity, the researchers send an electric current into it.

“We’ll see which areas in the brain are activated in each condition, and then compare the two patterns of activity,” Karunanayaka said. “Are they activating the same areas of the brain?” If so, that brain region could become the target for future research.

The researchers varied both the amperage and the frequency of the current to see which smell sensations would result. For certain combinations, the perceived smells were fruity or chemical in nature.