Five things you’ll one day be able to do via the internet

By Clare McDonald on November 22, 2013 3:37 PM

After a very surreal chat with a professor at City University London, I was filled in on the concept of multi-sensory human communication. It’s a bit of a mouthful, but Adrian David Cheok, professor of pervasive computing at City University, explained that the internet will eventually allow communication that goes beyond just vision and hearing. He thinks we’ll go from sharing data to sharing “experience”.

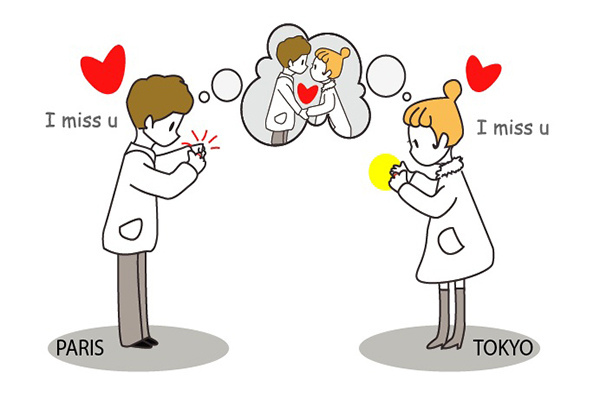

After our chat, I felt full of hope about what the future would hold. A lot of companies now expect employees to travel abroad, and everyone knows the strain that can put on families and individuals when they can’t properly communicate with those at home. But what if we could taste, smell, hug and kiss via the internet? Professor Cheok explained that 60% of human communication is non-verbal, so although long-distance communication has come a long way, it still doesn’t suffice. Here are some of his ideas and projects for interactive technology in the future:

Touch

Sometimes you might have to go to a conference while your partner is at home, in another country, or in another time zone. You have a passing thought about them and wish they knew they were on your mind. The RingU was invented for this purpose: it is a device that allows users to send ‘bi-directional’ visual and physical messages to another paired ring. You can send vibrations and colours to represent your mood and thoughts. It’s not quite the same as being there in person or making a phone call, but sometimes you want to send a quick gesture just to let someone know you’re thinking of them. It could even be used in business environments. For example, there has been interest in the ring from financial firms that think it could help traders receive real-time updates on the stock exchange. The ring could vibrate to inform them of a movement in the stocks they follow.

Taste

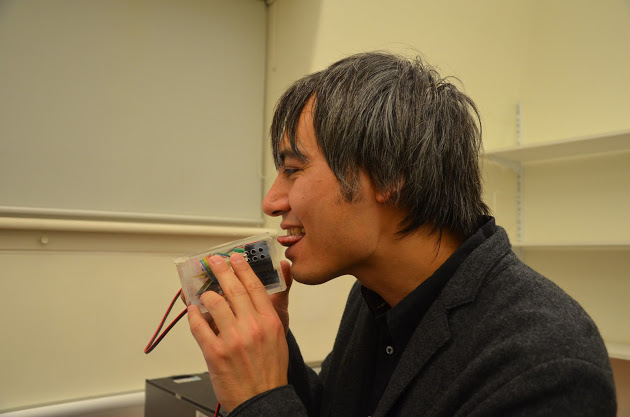

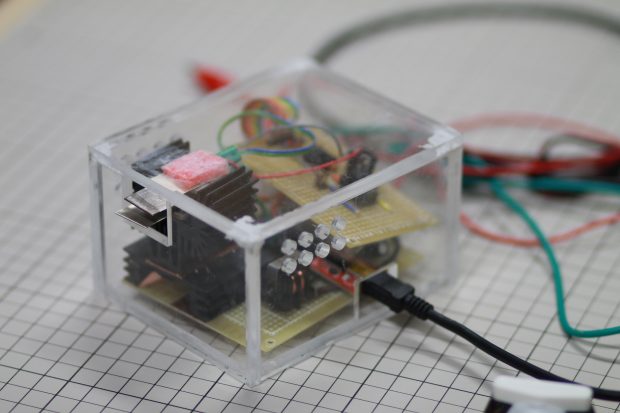

A lot of current scientific research surrounding taste involves using a mixture of chemicals to produce different taste sensations. Professor Cheok has created a device that uses electrical signals sent to the tongue to manipulate the brain into thinking it can taste certain things. The hope for this is that in the future, taste could be digitised so that people could share what they are eating via the internet. It could also be attached to eating utensils to change the way things taste as you eat them. Imagine eating a virtual lollipop; all the taste and no calories. This research is also linked with directly manipulating sensors in the brain to produce the sensation of taste or smell, similar to a technique already being used to treat depression.

Smell

Surprisingly, this one is already in production, and is called ChatPerf. It is a small device that plugs into your smartphone and emits a smell through a mixture of chemicals. If you wanted to share a food smell with someone, you could text it to them. You could sync it with your alarm clock to spray a coffee smell to get you going in the morning. There has even been the idea that you could use it to help you power through a diet: because smell and taste are so closely associated, you could spray the smell of beef to make your salad taste better. It could also be used in healthcare, using familiar smells to trigger memories for elderly patients and remind them to do things such as take medication. Or it could be used in marketing to promote products such as fabric softener or deodorant.

Kiss

Cheok is currently working on a ‘bi-directional kiss messenger’ to simulate kissing via the internet. If you’re a jet setter who is away from home for long periods, you can use these devices to call home and maybe even get a little kiss from your partner. You each plug the device into your smartphone, and the silicone pads simulate the movement of your partner’s mouth and lips.

Hug

Travelling for work can be really difficult, especially if you have kids (or cats) at home who don’t fully understand why you have to be away so often. A prototype device allowed a person to touch sensors on a doll, which then transferred the pressure to a jacket on their pet. To extend this interaction to human communication, a ‘hugging pyjama’ was invented to allow the same interaction to take place between a parent and their child over a long distance. This device allows you to hug someone at home from wherever you are in the world. Using pressure sensors, the jacket applies pressure to the wearer’s body in response to where you touch, giving the illusion that you are hugging them.

For more of Professor Cheok’s work, visit his website.
