By Ellie Buchdahl, 9 June 2014

Nothing says ‘welcome home’ like the warm smell of freshly baked bread, does it? And nothing says ‘I love you’ better than a big, tight squeeze from a friend.

So wouldn’t it be great to be able to send hugs and scented wishes across the world as easily as a phone call, a text message or an email?

Thanks to work involving students at City University in London, now you can.

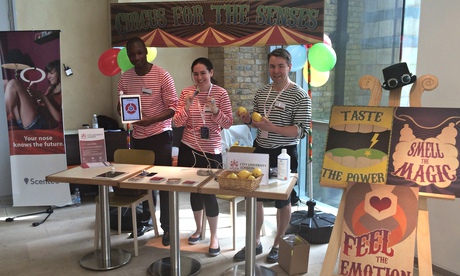

Visitors to the Multi-Sensory Internet stand at the Natural History Museum during Universities Week will be able to try on a ring that gives them a ‘remote hug’, get a shot of flavour from a ‘digital lollipop’, or sniff a ‘Scentee’ device – a phone that transmits smells including strawberry, lavender and coffee.

Academic, apprentice and entrepreneur

Jordan Tewell, from Ohio in the USA, is on a team of international and UK students working with entrepreneur Adrian David Cheok, Professor of Pervasive Computing at City University, to create these ‘sense-sending’ devices.

Jordan’s PhD in Computer Science sounds more like a full-blown apprenticeship in entrepreneurism and networking than a programming project.

‘My day-to-day work really depends on Adrian’s schedule,’ Jordan says.

‘Usually we’ll run some tests, experiments or demonstrations in the lab, but we have regular outings to events at The Hangout, which is a space set up between the university and local entrepreneurs from London’s Tech City to let students learn entrepreneurship skills and give them a chance to attract investment in their ideas.

‘I also go along with Adrian to companies to make professional pitches for products he’s marketing.

‘We recently took the Scentee to a major multinational consumer products company, and they’re going to use it in a promotional campaign for antiperspirant.’

Professor Cheok and his gadgets are well known in the advertising world.

He has worked with the Michelin-starred restaurant Mugaritz in Spain to develop a mobile app that recreates some of chef Andoni Luis Aduriz’s top dishes. He also worked with a Japanese company to produce a campaign in the USA with meat manufacturer Oscar Mayer, developing a mobile phone ‘alarm clock’ that wakes people with the smell and sound of bacon cooking via the Scentee.

But the input of the PhD students at City is invaluable to his work.

‘My background was computer science,’ says Jordan. ‘Adrian is a real gadget guru, but I’ve brought in my skill set in programming.’

International experience

‘One of the best things about doing this project is working with an interdisciplinary team,’ Jordan adds.

‘There are businessmen, designers in other technical areas, programmers – and it’s incredibly international, too, which was another reason why I wanted to come to the UK.’

One of Jordan’s jobs is to translate the engineers’ technical drawings and product requirements into simpler picture guides.

These are used by designers based in Japan, and Jordan’s task involves negotiating a language barrier.

‘It’s one of the realities of the industry, and I’m now used to boiling down every nook and cranny of what I do into a format that someone who doesn’t speak excellent English can understand,’ he says. ‘It’s prepared me for anything down the road.’

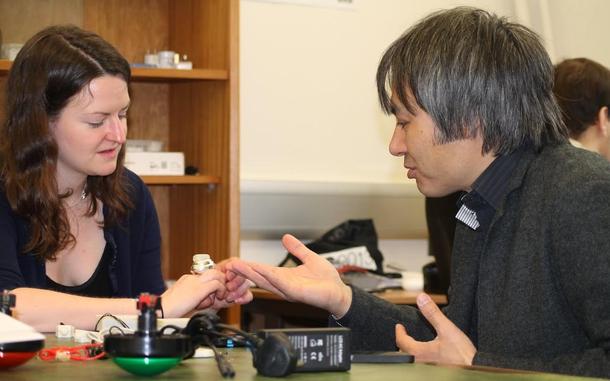

So how do these virtual tastes actually work?

It’s all down to chemicals and currents, apparently.

The Scentee is loaded with reservoirs of alcohol-based solutions that mimic ‘coffee’ or ‘roses’, for example, but that linger longer than the ‘real’ smells would.

Other devices, such as a taste radio, have an apparatus that can be placed in the mouth to stimulate sweet- or salt-detecting neurons on the tongue using harmless bursts of electricity.

The research that underpins these devices could have practical medical applications as well as commercial ones, such as ‘recreating’ taste for people with mouth or tongue disorders, or inducing sweet tastes for people with diabetes.

With studies showing that taste and memory are intrinsically linked, there is also potential for memory-aiding devices that go beyond the verbal and visual – to prompt people with Alzheimer’s disease to take medication, for example.

‘This is creating a whole language of taste that can be used in a scientific way, and it’s opening up a completely new area of molecular gastronomy,’ Jordan says. ‘We could even create tastes that you wouldn’t experience in the real world.

‘We are working on cutting-edge stuff here, and in a couple of years we could be building businesses out of it,’ Jordan adds.

‘This is a great opportunity – and a real chance to network and show the world what we’ve created.’

Professor Cheok, from City’s School of Mathematics, Computer Science & Engineering, is the founder and director of the

The RSA (Royal Society for the encouragement of Arts, Manufactures and Commerce) is an enlightenment organisation which is committed to finding innovative practical solutions to today’s social challenges. Through its ideas, research and 27,000-strong Fellowship, it seeks to understand and enhance human capability so we can close the gap between today’s reality and people’s hopes for a better world. Based in London and founded in 1754, the RSA was granted a Royal Charter in 1847 and the right to use the term Royal in its name by King Edward VII in 1908.
